[Sec Issue] “AI Security Strategy 2026: ‘AI for Security’ vs ‘Security for AI’ — 5 Critical Points Every Security Professional Must Know”

AI Security Strategy 2026: ‘AI for Security’ vs ‘Security for AI’ — 5 Critical Points Every Security Professional Must Know

Why AI Security Strategy Needs to Be Redefined — Right Now

The agenda in CISO meetings has fundamentally shifted. Two years ago, the central question was “How do we mature our ransomware response?” Today, it’s “Is the generative AI we just deployed secure?” and “If attackers use AI against us, can our SOC keep up?” This is the state of AI security strategy in 2026.

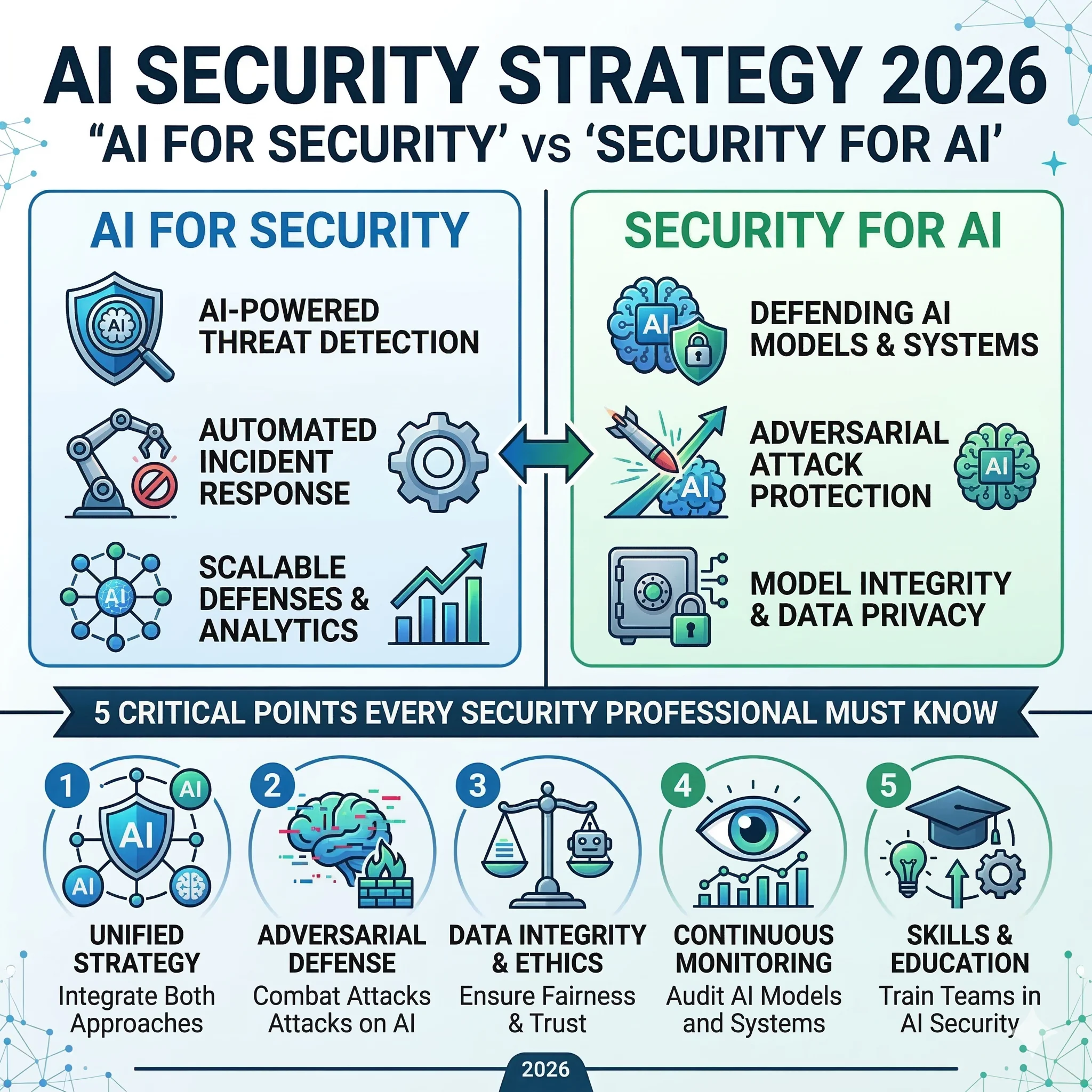

The challenge is that “AI and security” is being used in two entirely different directions simultaneously in practice. One is using AI as a security tool (AI for Security), and the other is protecting AI systems themselves (Security for AI). Failing to clearly distinguish between these two concepts leads to a critical strategic error: concentrating budget and resources in the wrong place.

services market 2024

incl. AI security

OWASP LLM Top 10

agent framework components

Sources: IDC Security Services Market Report (2024), OWASP Top 10 for LLM 2025, Barracuda Security Report (Nov 2025)

Concept Separation: ‘AI for Security’ vs ‘Security for AI’

These two concepts are conflated in practice because they both get labelled simply as “AI security.” But reading the original English framing reveals that their orientations are diametrically opposite. Microsoft’s security blog defines them precisely:

“AI for cybersecurity involves using AI tools to improve an organization’s ability to detect, respond to, and mitigate threats, while security for AI involves protecting AI systems themselves — the strategies and tools designed to defend AI models, data, and algorithms from threats.” — Microsoft Security, ‘What is AI for Cybersecurity’

🛡️ AI as a Security Tool

Purpose: Leverage AI as a means to protect the organization from existing cyber threats

Core question:

“Can AI strengthen our security posture?”

- AI-powered SIEM & SOAR automation

- ML-based anomaly detection (UEBA)

- AI threat intelligence analysis

- Deepfake & phishing detection algorithms

- Automated vulnerability scanning

🔒 AI as the Protected Asset

Purpose: Protect AI systems, models, and data themselves from attack

Core question:

“Are our AI systems actually secure?”

- Prompt injection defense

- Model & training data integrity protection

- LLM agent excessive-permission controls

- AI supply chain security validation

- AI guardrails & red team operations

Sources: Microsoft Security Blog, Red Hat AI Security Guide, OWASP Top 10 for LLM 2025

The distinction in one sentence: AI for Security treats AI as the subject — the actor performing security tasks. Security for AI treats AI as the object — the thing that must be protected. The reality we now face is that failing to pursue both directions simultaneously means AI itself becomes the largest attack surface — a genuine paradox.

in production

& supply chains

targeted campaigns

require response

AI adoption → expanded attack surface → advanced threats → integrated AI security strategy is now a mandatory cycle

Three Realities Facing CISOs and Security Consultants

A consistent pattern emerges when AI security strategy is discussed in enterprise settings. Leadership pushes for accelerated AI adoption. Security teams call for “further review.” But with no framework for what that review should actually entail, the conversation spins in circles. This is the first reality.

- No risk assessment framework for AI adoption

- Absent or immature LLM usage policies and governance

- AI security not yet a separate budget line item

- No formal AI supply chain validation process

- Blind spots in monitoring employee AI usage anomalies

- Limited experience operating AI guardrails and red teams

- AI security gaps within existing frameworks (ISO 27001, SOC 2, etc.)

- Difficulty mapping each client’s actual AI usage footprint

- Need for OWASP LLM & MITRE ATLAS–based assessment capability

- Lack of standardized AI lifecycle security assessment methodology

- No established AI red team penetration testing playbook

- Proactive positioning needed for emerging AI regulations (EU AI Act, etc.)

Sources: CIO, “7 Key AI & Cybersecurity Challenges for 2025”; Samsung SDS, “2026 Cybersecurity Threat Trends”

“Most organizations have not invested sufficiently in any of risk analysis, clear policies, procedures, or technical controls — and are ignoring the scale of security problems that LLMs introduce.” — Joseph Steinberg, Cybersecurity & AI Expert (CIO, May 2025)

The second reality is an asymmetry of pace. Attackers are already using AI to automate and refine attacks. Yet the defensive side’s AI security strategy formation can’t keep up. SecureAI’s 2025 security trend report listed “AI as a double-edged sword — simultaneously expanding both AI-powered threats and AI-powered defenses” as trend number one.

The third reality is a regulatory gap. While the EU AI Act and emerging national AI regulations are in force or taking shape, existing security frameworks (ISO 27001, SOC 2, etc.) have not yet explicitly carved out dedicated AI security controls. For consultants, this gap represents a significant new service opportunity.

5 Critical AI Security Threats to Watch in 2026

Building an effective AI security strategy requires an accurate picture of the threat landscape first. The following five threats are drawn from cross-analysis of OWASP Top 10 for LLM 2025, MITRE ATLAS, and research reports from Samsung SDS and Barracuda Security.

-

01CRITICAL

Prompt Injection

Attackers manipulate natural-language inputs to make an LLM execute unintended commands. Ranked #1 in the OWASP LLM Top 10 continuously since its 2023 debut — and still #1 in 2025. Attacks have evolved to zero-click exploitation via embedded instructions in ordinary business files, as demonstrated by MS 365 Copilot’s “EchoLeak” (CVE-2025-32711).

-

02CRITICAL

Excessive Agency in AI Agents

When LLM agents are granted excessive permissions, misuse or compromise can cascade directly into data exfiltration or system breach. Samsung SDS classifies “over-delegation and abuse of AI agent authority” as a top 2026 threat. Agent memory poisoning attacks execute with deliberate delay — sometimes weeks later — making detection extremely difficult.

-

03HIGH

AI Supply Chain Attacks

The Barracuda Security Report (November 2025) confirmed 43 vulnerable agent framework components introduced via supply chain compromise. Open-source LLM libraries, fine-tuning datasets, and external API plugins all serve as entry points. By the time a breach is detected, backdoors may have been installed months earlier.

-

04HIGH

Training Data Poisoning & Model Corruption

Attackers embed malicious triggers in fine-tuning datasets, causing misbehavior under specific conditions. In RAG (Retrieval-Augmented Generation) environments, indirect poisoning via contaminated external documents is also confirmed. When combined with hallucination — where AI presents incorrect information as fact — legal and ethical risks escalate sharply.

-

05HIGH

AI-Powered Social Engineering (Deepfake Fraud)

In 2025, engineering firm Arup lost $25 million in a deepfake video-call fraud. As audio and video synthesis technology matures, KYC processes and internal approval workflows can be neutralized entirely. CIO warns that “AI-powered digital deception will become normalized from 2025–2026 onward.”

Sources: OWASP Top 10 for LLM 2025, Barracuda Security Report (Nov 2025), Samsung SDS 2026 Security Threat Trends, CIO, Stellar Cyber

7 Strategic Directions for Security Leaders and Consultants

With the threat landscape mapped, the next step is determining the execution direction for an AI security strategy. The following seven directions are drawn from field experience and leading frameworks.

🗂️ Build an AI Asset Inventory

Map which AI tools, LLMs, and agents are running in your environment, with what permissions, connected to what data. Without visibility, there is no control. Integrate an AI asset register into existing IT asset management systems.

📋 Establish AI Usage Policy & Governance

Explicitly define what data can be fed into AI, which plugins are permitted, and what outputs require human review. Proactively incorporate requirements from the EU AI Act, NIST AI RMF, and other applicable frameworks into internal policy.

🔴 Deploy AI Red Teams & Adversarial Testing

Add AI-specific attack techniques (prompt injection, agent manipulation) to existing penetration testing programs. Build an AI red team operational framework using the MITRE ATLAS adversarial tactics catalog as the reference baseline.

🛡️ Implement AI Guardrails & I/O Monitoring

Monitor all LLM input and output in real time and block anomalous patterns. Samsung SDS recommends “applying AI guardrails and enforcing controls across the entire AI lifecycle” as the core 2026 response posture.

🔗 Formalize AI Supply Chain Security

Mandate provenance verification, integrity checks, and license validation for all external LLM APIs, open-source models, and plugins before adoption. Embed AI supply chain security clauses in vendor contracts from the procurement stage.

📊 Extend Existing Frameworks with AI Security Controls

Augment ISO 27001 / SOC 2 / NIST CSF with AI-specific controls covering model protection, LLM access management, and data integrity. Position ahead of anticipated updates to certification standards and regulatory requirements.

🎓 Build an AI Security Awareness Program

Train employees on the security risks of AI usage — including prohibitions on entering confidential data or PII into external models — and run deepfake scenario exercises regularly. Technology matters, but people remain the most exploited vulnerability.

Sources: Samsung SDS 2026 Security Threat Trends, OWASP LLM Security, MITRE ATLAS, NIST AI RMF (2024)

A New Service Frontier Opening for Security Consultants

From a consulting perspective, this shift is simultaneously a challenge and an opportunity. Clients are now asking the question: “Can you assess whether our AI is secure?” Answering that question requires internalizing the OWASP Top 10 for LLM, MITRE ATLAS, and AI lifecycle security assessment methodology. Expanded consulting services — existing ISO 27001 or SOC 2 engagements augmented with AI-specific diagnostic modules, AI red team outsourcing, and AI security policy development — represent the fastest-growing segment of the security advisory market from 2026 onward.

※ Phase timing should be adjusted based on organizational scale and AI adoption maturity

AI Security Strategy: How to Handle a Double-Edged Sword

The “double-edged sword” framing is precise — because AI amplifies the detection capabilities of the defense by an order of magnitude while simultaneously amplifying the attack velocity of the adversary by the same factor. The problem is that too many organizations treat these as separate concerns and, in doing so, end up failing at both.

📌 Key Takeaway: AI for Security means using AI as a tool to enhance threat detection, response, and automation. Security for AI means protecting AI systems themselves from attack. Effective AI security strategy from 2026 onward requires advancing both axes simultaneously — calibrated to the organization’s AI adoption maturity and risk profile.

For enterprise security teams: There is something you can do today. Compile an inventory of every AI tool currently in use within your organization. Map what data is being fed into each. That single action represents half of a solid AI security strategy, already begun.

For security consultants: Prepare for the question that is already landing in client inboxes: “Can you assess our AI?” Internalizing OWASP Top 10 for LLM and MITRE ATLAS is the starting point for that readiness.

The speed of AI-generated threats has already outpaced human-speed review. That is precisely why AI security strategy is not merely a technical problem — it is a governance, policy, and culture problem. Technology teams and compliance functions must work in the same room. Leadership must recognize AI security not as a cost center but as a prerequisite for business continuity. Driving that shift in perception is the most important role a security professional can play in this moment.

- Microsoft Security — What is AI for Cybersecurity?

- Red Hat — What is AI Security? (Security for AI concept definition)

- Samsung SDS — 2026 Cybersecurity Threat Trends and Response

- Samsung SDS — Top 10 Vulnerabilities in Large Language Models (OWASP LLM Top 10)

- CIO — Can AI Actually Help Security? 7 Key AI & Cybersecurity Challenges for 2025

- CIO — LLM Application Security: OWASP & MITRE ATLAS Framework

- CIO — AI Attack Techniques Hiding Commands in Macros (Prompt Injection Evolution)

- Stellar Cyber — Major Agentic AI Security Threats in 2026

- OWASP — Top 10 for Large Language Model Applications (2025 Edition)

- MITRE ATLAS — Adversarial Threat Landscape for AI Systems

- NIST — AI Risk Management Framework (AI RMF 1.0)

- Introl — LLM Security: Prompt Injection Defense Production Guide 2025

- Barracuda Security — AI Supply Chain Security Report (November 2025)

- European Parliament — EU AI Act: First Regulation on Artificial Intelligence

![[Sec Issue] 23 Million Mobile Subscribers’ Data Leaked: The Full Story Behind the 2025 SK Telecom SIM Breach and Future Preparations](https://ota2z.com/wp-content/uploads/2025/04/ChatGPT-Image-2025년-4월-28일-오후-07_46_11-768x512.webp)